Welcome to the 76th edition of The Week in Polls, which finds me muttering left, right and centre at over-interpretations of the party conference season, given how limited the evidence is that conferences move the voting intention polls. Very, very limited.1

So instead I’m going to look at a fuss over a pollster who put some, let’s say, highly contentious claims in one of their polls. Plus as it’s a new quarter, there are bonus council by-election statistics.

Then it’s a look at the latest voting intention polls followed by, for paid-for subscribers, 10 insights from the last week’s polling and analysis. (If you’re a free subscriber, sign up for a free trial here to see what you’re missing.)

As ever, if you have any feedback or questions prompted by what follows, or spotted some other recent polling you’d like to see covered, just hit reply. I personally read every response.

Before all that, a word of thanks to the MRP team at Stonehaven who kindly took the time to discuss the details of their polling approach during the week. Last time I mentioned the eyebrows raised by some over their apparent approach to sample sizes. But this seems to be more an issue of unfortunate wording on their website as they make use of previous data too; it’s just that their freshest data is smaller in size than you’d expect for an MRP overall.

Been forwarded this email by someone else? Sign up to get your own copy here.

Want to know more about political polling? Get my book here.

Or for more news about the Lib Dems specifically, get the free monthly Liberal Democrat Newswire.

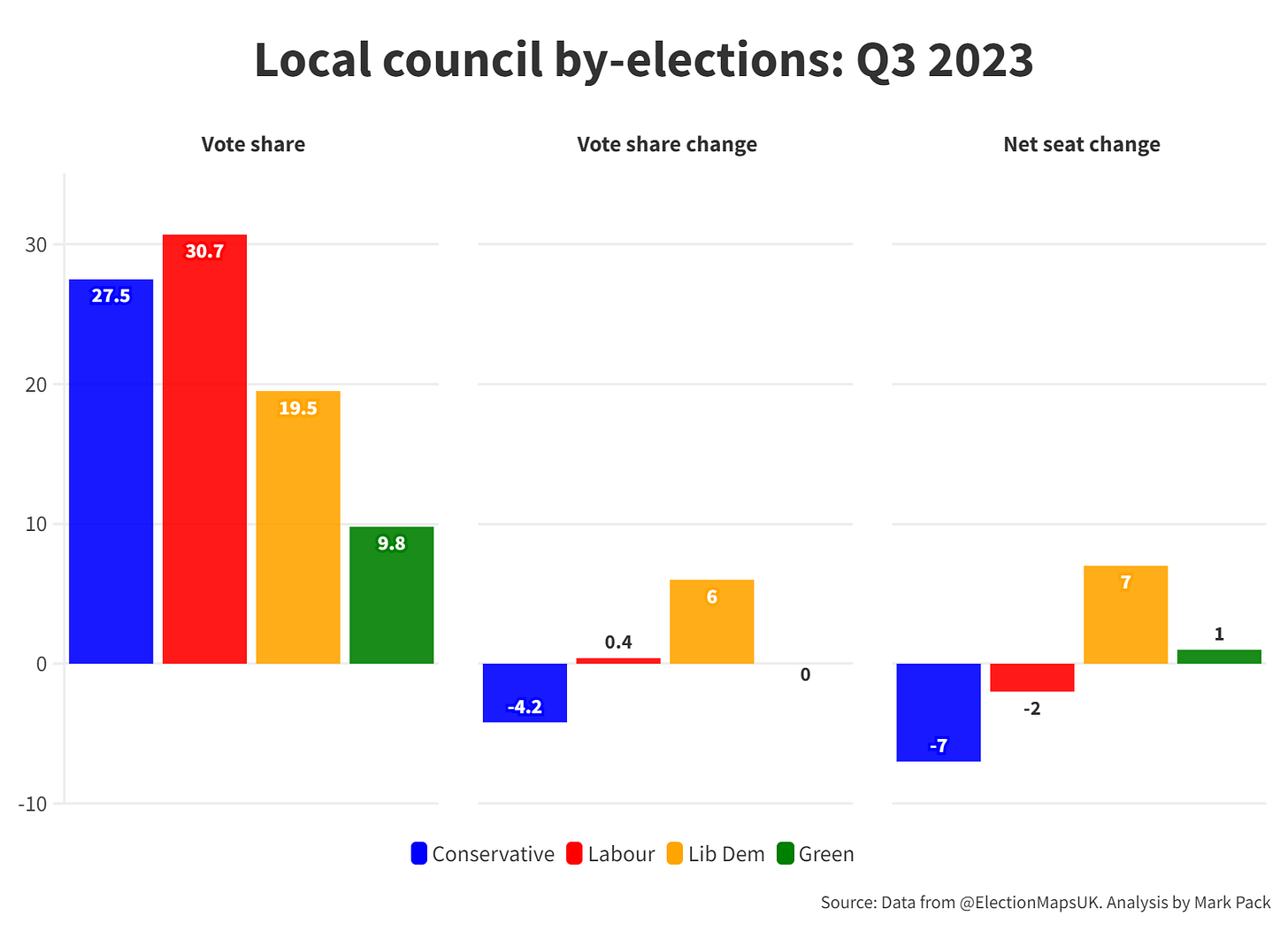

Council by-election results: what the voters are doing

Should pollsters stick to the truth in what they poll?

A few years back I worked through all of the options available with a pollster and the relevant regulator2 over a polling question that presented as if it were true a false statement about a Liberal Democrat. I was politely rebuffed all the way down the line. Putting a false claim to someone in a poll, without even telling them after that it was false, was considered reasonable, acceptable and ethical.

It was not a big enough deal for either the pollster or the regulator to be really put on the spot and set out in detail their justification, but there seemed to be two defences behind their rebuffs.

One, that it is acceptable - even desirable - for a legitimate poll to test out the public’s reaction to a false statement. After all, imagine how restrictive it would be to research the impact of fake news if ethical considerations prevented a genuine researcher ever doing this. As long as the sample is small enough - so it’s genuine research rather than push polling, designed to spread the false statement rather than to test it - then the argument goes that it’s ok.

The second argument is that even if it were wrong to poll false statements - or at least wrong to do so without adding a disclaimer at the end of the poll to reveal their falsity - then it still isn’t reasonable to expect a pollster to be the arbiter of truth. Sure, it’s reasonable to expect a pollster to avoid questions that are so extreme as to, say, incite violence. But a pollster is an expert in sampling and question wording rather than an expert in all the subjects that their polls may cover.3 So how could you expect them to be able to put a truth test on all their questions?

Both defences have some merits. The overall situation rather resembles the dilemmas for those providing online services, such as social networks, hosting companies and internet traffic management firms. For the online world, I generally share the view that the closer the service is to core infrastructure, the less reasonable it is to put moderation requirements on the firm. Just as we don’t expect the Royal Mail - the provider of the core letter collection, distribution and delivery infrastructure - to be responsible for checking the content of every item they deliver for things like libel.

Which all came back to mind when there was a fuss this week over some polling from JL Partners.

As ever, let’s give a shout out to the culture of transparency in British polling,4 because we can better understand the fuss due to the relevant details all being published.

What was the fuss?

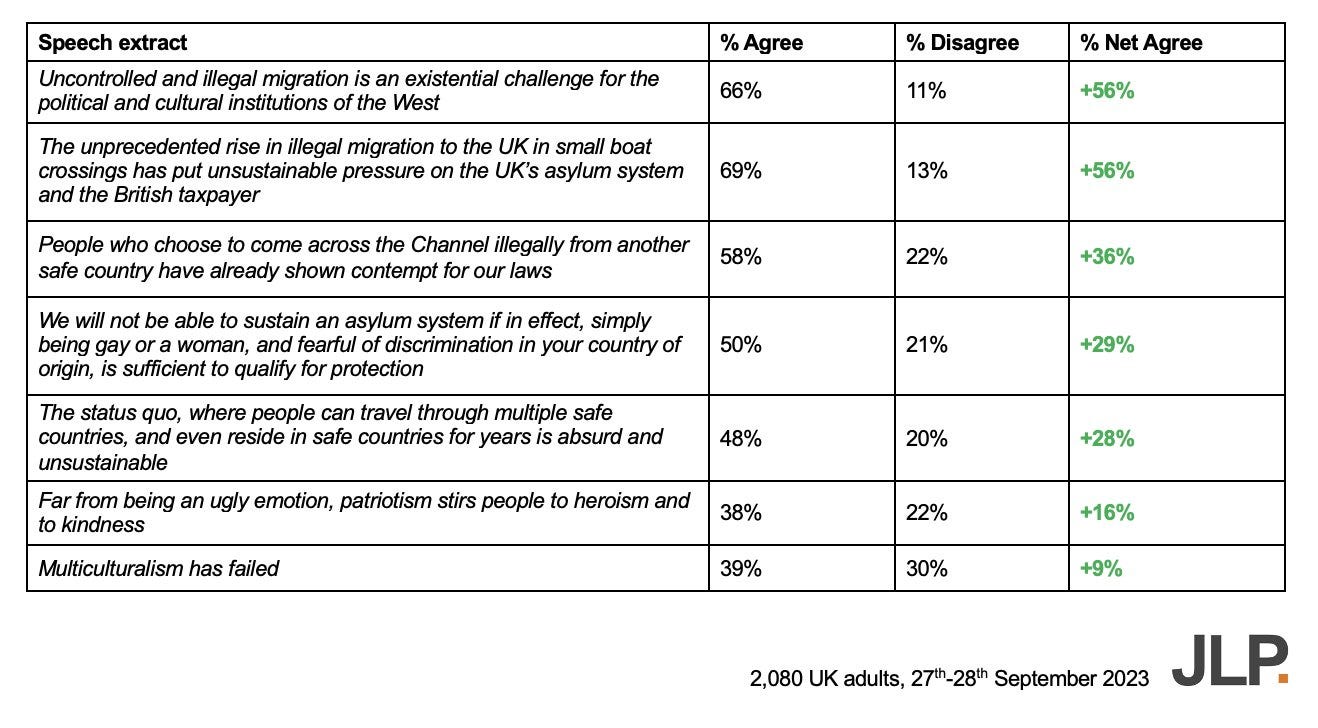

It was about this poll:

Some of those claims are, let’s say, somewhat controversial. So were they ok to poll? Social media, of course, provided some low-key, low-stress, consensus-seeking views on this:

The case for the defence is that all those statements polled were extracts from Home Secretary Suella Braverman’s Conservative Party conference speech. So although the poll figures produced plenty of criticism from people about loaded question wording, there is a decent defence to make. Isn’t it reasonable for a pollster to test out whether or not the public agree with what a high profile politician has said, regardless of how controversial or questionable that speech was?

Or as I put it:

That does though still leave two problems. One is about the poll design. As YouGov’s Patrick English commented, and as Anthony Wells has been pointing out for decades, the agree/disagree format in such cases is problematic, including because of the way people skew towards agreeing with whatever they are asked about.

But also, the speech wasn’t given in a vacuum, as a one-off that no-one argues back over. The most insightful polling is to test out not just a speech on one side of a debate but speeches on both sides.

As ever, the best polling requires more polling.

Know other people interested in political polling?

Refer friends to sign up to The Week in Polls too and you can get up to 6 months of free subscription to the paid-for version of this newsletter.

National voting intention polls

Once again, it is a week without a poll putting the Conservatives on more than 30%, extending the run stretching back to late June (when a Savanta poll gave them 31%).

Here are the latest figures from each currently active pollster:

For more details and updates through the week, see my daily updated table here and for all the historic figures, including Parliamentary by-election polls, see PollBase.

Last week’s edition

Three polls in need of a second glance.

My privacy policy and related legal information is available here. Links to purchase books online are usually affiliate links which pay a commission for each sale. Please note that if you are subscribed to other email lists of mine, unsubscribing from this list will not automatically remove you from the other lists. If you wish to be removed from all lists, simply hit reply and let me know.

A different explanation of the Uxbridge by-election result, and other polling news

The following 10 findings from the most recent polls and analysis are for paying subscribers only, but you can sign up for a free trial to read them straight away.
